We are proud to announce that the Journal of the Acoustical Society of America (JASA) has just published our proposal of the equatorial microphone array (EMA): DOI: 10.1121/10.0005754 [ pdf ]

The EMA is essentially a spherical microphone array (SMA) but with microphones only along the equator. Our JASA article demonstrates how a spherical harmonic decomposition can be obtained from the EMA signals. We have focused on binaural rendering of the signals so far.

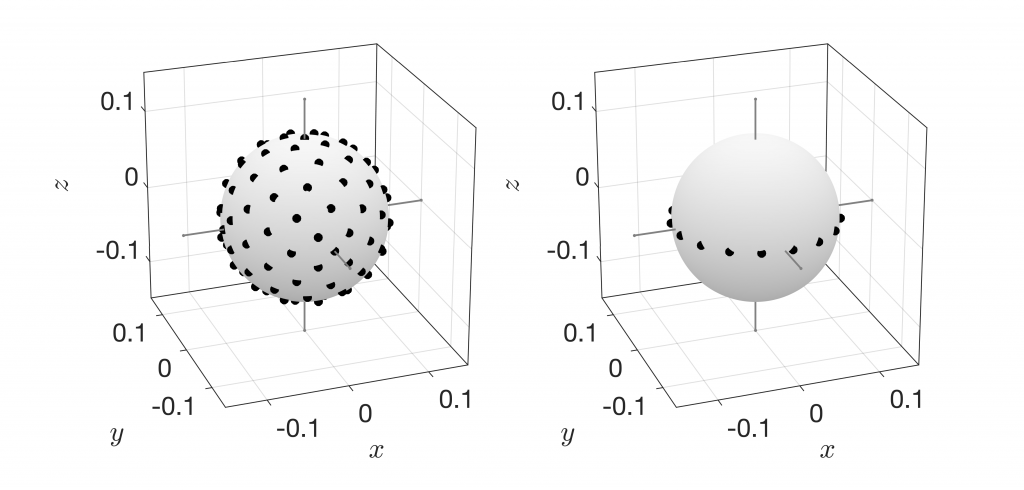

The main advantage of the EMA is that it requires far fewer microphones than an SMA for the same spherical harmonic order. The image above shows an 8th-order SMA on the left that employs 110 microphones on a Lebedev grid (you can get away with 81 microphones on a quasi-uniform grid, too) and an 8th-order EMA with 17 microphones on the right.
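As a rough sketch of where the 81 and 17 come from: an order-N spherical harmonic decomposition has (N + 1)² coefficients, which sets the lower bound for an SMA, while a ring of microphones only needs to resolve the 2N + 1 circular harmonics along the equator. The function names below are made up for illustration, and the assumption is that the minimum microphone count equals the number of harmonics to be resolved; practical grids such as the 110-node Lebedev grid use more.

```python
def sma_min_microphones(order: int) -> int:
    """Lower bound for an SMA: number of spherical harmonic
    coefficients up to the given order, (N + 1)^2."""
    return (order + 1) ** 2


def ema_min_microphones(order: int) -> int:
    """Assumed lower bound for an EMA: number of circular
    harmonics up to the given order, 2N + 1."""
    return 2 * order + 1


if __name__ == "__main__":
    for n in (1, 4, 8):
        print(f"order {n}: SMA >= {sma_min_microphones(n)}, "
              f"EMA >= {ema_min_microphones(n)}")
    # For order 8 this gives 81 (SMA) and 17 (EMA), matching the numbers above.
```

The gap widens quickly because the SMA count grows quadratically with the order while the EMA count grows only linearly.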

The EMA clearly has a few limitations. In particular, it cannot preserve monaural elevation cues because it cannot, in principle, capture elevation information. Elevated sound incidence distorts the magnitude of the binaural output signals by a few dB. Interestingly, the EMA can still preserve interaural level differences (ILD) and interaural time differences (ITD), even for elevated sources. Different experiments have shown that this typically also leads to the perception of elevation. Beyond that, the EMA has no further limitations: close sources, far sources, and the like work just as well as with SMAs.

Here are a few simple audio examples: https://doi.org/10.5281/zenodo.4805266 Please note that they are free-field simulations, so they might sound a little boring… (UPDATE: Here's a live demo of the EMA)

Last but not least: We are not aware of any proof that SMAs are actually capable of preserving monaural elevation information. This is not surprising, given that spatial aliasing typically occurs in the frequency range where most monaural HRTF elevation cues are located. It could therefore be that even SMAs are not really capable of overcoming the limitations of EMAs. Rest assured that we will follow up on this. We're currently building a prototype.

Thanks to Facebook Reality Labs Research for funding our work on the EMA!