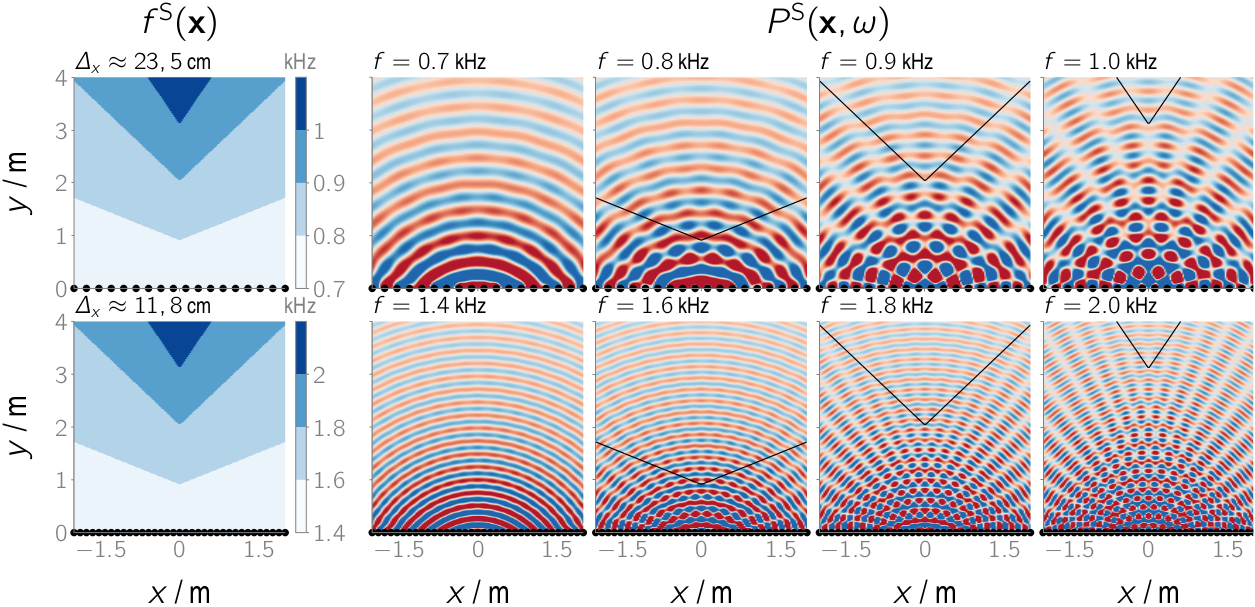

At the 45th Annual German Conference on Acoustics (DAGA), we presented further thoughts on the connections between near-field compensated higher-order Ambisonics (NFC-HOA) and Wave Field Synthesis (WFS). See the accompanying GitHub repository for the manuscript, the slides and the extended derivations.

Schultz, F.; Firtha, G.; Winter, F.; Spors, S. (2019): “On the Connections of High-Frequency Approximated Ambisonics and Wave Field Synthesis.” In: Proc. of the 45th DAGA, Rostock, pp. 1446-1449.